Effects of a Novelty Virtual Interactive Brain Atlas on Student Perception of Neuroanatomy

Abstract

The instructional techniques in neuroanatomy laboratories continue to evolve to incorporate online interactive resources to improve student experience and outcomes. This study aimed to design an “all in one” Virtual Interactive Brain Atlas (VIBA) that provides students with an educational resource to improve their knowledge of neuroanatomy while in the brain lab and with lab resources they can use to self-study and self-test. Coronal, midsagittal, whole brain, and horizontal brain slices were used to create detailed descriptions, interactive features, and quiz assessments for VIBA. Upper-level undergraduate and optometry students taking a one-semester neuroanatomy course were provided with VIBA for use during the semester. A paper survey was distributed after completion of the course to determine student perception. No significant difference was indicated between the student groups regarding their self-reported understanding prior to the brain lab (p = 0.194) and after the brain lab (p = 0.308). There was a significant difference between the student populations in strongly agreeing that the online brain atlas improved their understanding of neuroanatomy (p = 0.032) and that the VIBA tool was easy to navigate (p = 0.048). There was also a significant difference between the two student groups in strongly agreeing that the online brain atlas quality was sufficient (p = 0.015). This online interactive brain atlas was created in a time-efficient manner from readily available models and was well received by experienced neuroanatomy faculty and students.

Author Contributions

Academic Editor: R. Clinton Webb, Cell Biology and Anatomy School of Medicine Columbia.

Checked for plagiarism: Yes

Review by: Single-blind

Copyright © 2024 Davies H.C, et al

This is an open-access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

Competing interests

The authors declare no conflict of interest.

Introduction

Current approaches to teaching anatomy vary, making it difficult to determine the best method for students' learning. Human donor-based dissection has been regarded as the core instructional method in the gross anatomy curriculum for decades. Many studies have researched the benefits of anatomical dissections, stating that dissection leads to many advantages, such as an appreciation of whole-body pathology, an understanding of variations in anatomy, enhanced hand surgical skills, and an improved understanding of the ethical and moral ideals in medicine 1, 2, 3.

A specific subset of anatomy, neuroanatomy, is of particular difficulty for students across all disciplines and levels 4. Students find that visualizing the structures, the amount of information, and the decreased time spent in the gross laboratory make learning neuroanatomy particularly challenging compared to other anatomical topics 5. Additionally, the complexity of the three-dimensional structure of the brain makes learning neuroanatomy even more difficult, challenging students to think about depth and spatial relationships 6. Many schools are reducing laboratory hours for teaching neuroanatomy 7 because of limited availability of brain specimens 8, 9, 10, 11, 12 and a reduction in qualified faculty 13, 14, 15, 16, 17. Further complicating the teaching of neuroanatomy is the increased anxiety, disinterest, and dislike students have around the subject 18, 19, 20.

To approach these challenges, educators have used different methods such as digital learning resources. Students benefit from having optional digital learning anatomy resources in addition to their traditional lecture-lab-based anatomy courses as these are founded on different teaching approaches/characteristics essential for practical and theoretical skills 21, 22, 23, 24. Studies have shown in-person instruction through didactics and dissections is still superior and more essential compared to digital-only instruction 25, 26. However, students have shown increased benefit from having digital learning anatomy resources in addition to their traditional lecture-lab-based anatomy courses 21, 22, 23. This suggests a multimodal approach, using both in-person experience and online resources, to be a more well-rounded approach 25, 26, 27.

Additionally, repeated testing has been shown to facilitate students' learning and enhance their retention of human anatomy 28. Assessments can include quizzes, lab practicals, presentations, and written assessments. Regardless of the type of assessment, testing increases students' understanding of the material, their confidence in their learning, and their ability to self-evaluate their understanding 25, 26, 27. However, neuroanatomy tends to lack practical assessments and alternative learning tools (such as online tools) 29.

To aid students with a better understanding of neuroanatomy, we developed a comprehensive online learning tool called the VIBA that would facilitate gross lab and at-home learning, by not only enabling them to view brain sections outside the lab, but most importantly providing students with self-testing tools. We hypothesize that the integration of the newly developed tool, VIBA, into neuroanatomy courses would benefit student learning by providing a supplemental study resource they can use for neuroanatomy both in laboratories and at home.

Experimental Procedure

Development of the Virtual Interactive Brain Atlas (VIBA) Study Tool

Image Acquisition

Plastinated brains (Von Hagans Inc, Guben, Germany) in coronal, horizontal, midsagittal, and whole brain sections were imaged to create the atlas.

1. Four coronal sections were imaged, both rostrally and caudally, totaling eight images.

2. Six horizontal sections were imaged, with three being imaged both superiorly and inferiorly and the remaining three being imaged only from one view, either superiorly or inferiorly, totaling nine images.

3. One midsagittal right-sided section was imaged both medially and laterally, totaling two images.

4. One whole brain was imaged, both ventrally and laterally, with the lateral view being imaged on the left side, totaling two images.

Overall, there are twenty-one total images taken for the study tool.
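The image total can be checked with a short tally. The sketch below simply restates the section and view counts given in the text; the dictionary and variable names are illustrative and are not part of the VIBA tool.

```python
# Image counts per section type, restated from the Image Acquisition text.
# Names are illustrative only; they do not come from the VIBA tool itself.
image_counts = {
    "coronal": 4 * 2,              # four sections, rostral and caudal views
    "horizontal": 3 * 2 + 3 * 1,   # three sections with two views, three with one
    "midsagittal": 1 * 2,          # one section, medial and lateral views
    "whole brain": 1 * 2,          # one brain, ventral and left lateral views
}

total_images = sum(image_counts.values())

for section, count in image_counts.items():
    print(f"{section}: {count}")
print(f"total: {total_images}")
```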

Tool Development

Images were uploaded to Microsoft PowerPoint (Microsoft Corporation, Redmond, Washington, United States), and action buttons were integrated into the tool, allowing for interactive features.

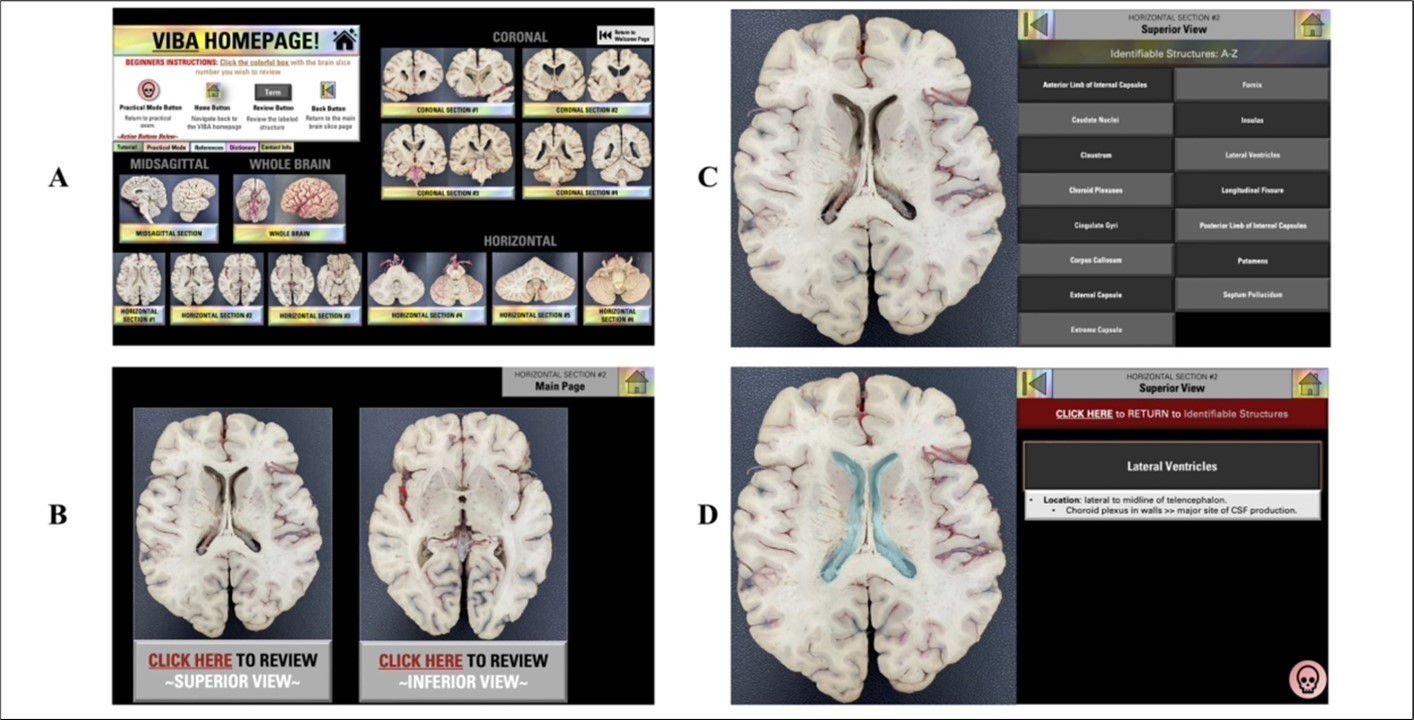

The VIBA study tool consisted of two "homepages," one for review mode (Figure 1A) and one for practical mode (Figure 2A). On the review mode homepage (Figure 1A), users had access to beginners' instructions, additional action buttons, and a variety of brain sections. Note that some of the brain sections have only one view while others have two.

To use the VIBA interactive features, users had to be in slide show mode. To begin reviewing, users clicked the colored box on the review mode homepage (Figure 1A) corresponding to the brain section they wished to review.

Clicking the colored box redirected the user to the main page of that section (Figure 1B). The user could then select a view, which opened a list of identifiable structures for review (Figure 1C). Selecting a term from the list opened a page with the structure highlighted and its definition provided (Figure 1D).

Figure 1. VIBA Plastinated Brain Review Mode: Homepage. (A) Review Mode Homepage. (B) The main page of the brain section. (C) List of identifiable terms for brain section. (D) Reviewed structure.

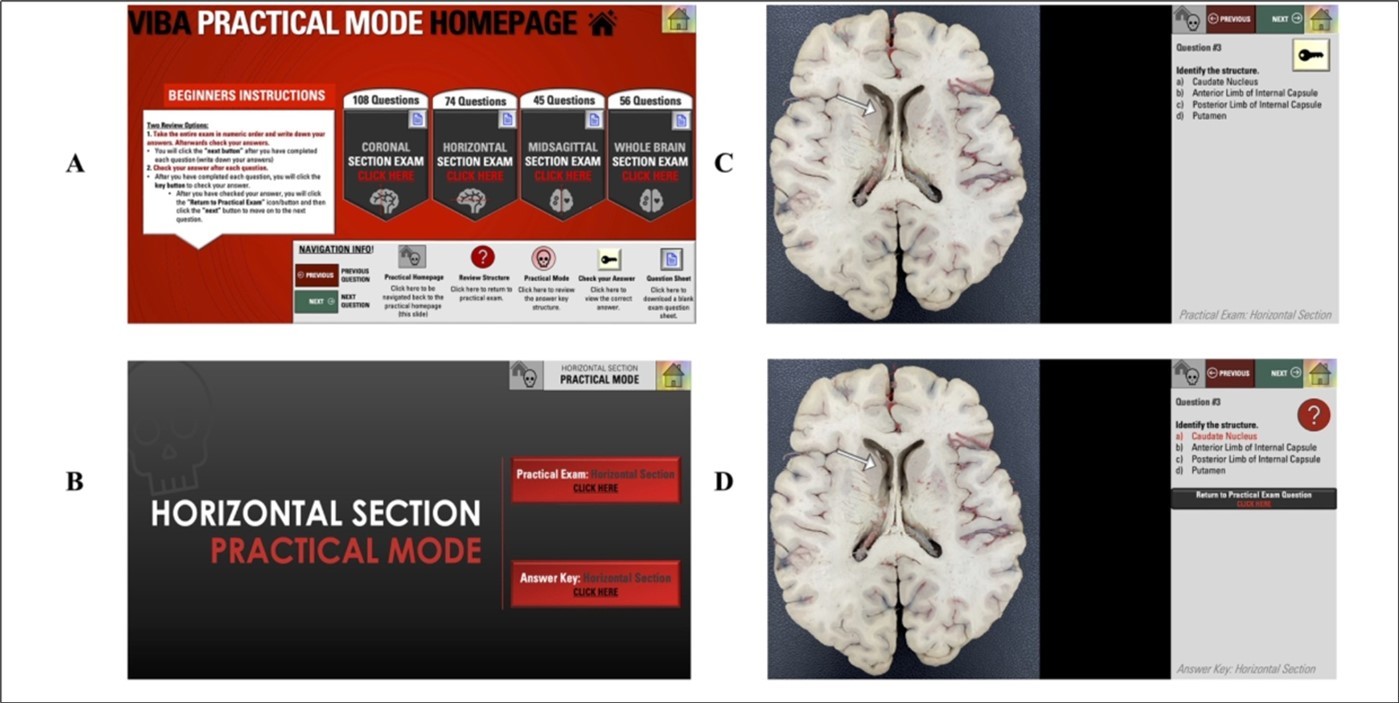

On the practical mode homepage (Figure 2A), users were provided with a total of one hundred and eight coronal section exam questions, seventy-four horizontal section exam questions, forty-six midsagittal section exam questions, and fifty-six whole brain exam questions. Once selected, users would be navigated to a section page (Figure 2B), allowing them to either begin the exam or view the answer key. Once users launched the exam (Figure 2C), they had two review options. Option 1 involved taking the entire exam in numeric order, keeping track of their answers on paper. Afterward, users would have the opportunity to check their answers. Option 2 allowed users to check their answers after each question by clicking the "key" icon (Figure 2D). Users could also click the "question mark" icon next to the answer key to return to the structure in the review mode section (Figure 1D). After reviewing, users could then click the skull icon in the bottom right to return to the answer key (Figure 2D). Users could then click "Return to Practical Exam Review" and move on to the next question.

Figure 2. VIBA Plastinated Brain Practical Mode. (A) Practical Mode Homepage. (B) The main page of the practical mode section. (C) Question. (D) Answer Key.

Overall, the process of creating the interactive brain atlas took approximately six months to develop, create, and revise. Revisions included informal analysis from instructors.

Methods and Materials

Operational Definition of Terms

Faculty – full time anatomy and physiology professors employed by Department of Cell, Developmental and Integrative Biology, School of Medicine, University of Alabama at Birmingham.

Students – for the pilot study – students enrolled in the Masters of Anatomical Sciences at the University of Alabama at Birmingham.

Students – participating in the survey described in manuscript – first-year optometry students enrolled in Neuroscience course and undergraduate students (juniors and seniors) enrolled in Neuroscience course.

Faculty Pilot Study

An institution-based pilot study was conducted prior to the implementation of the tool into the curriculum, with anatomy instructors and students in the Masters of Anatomical Sciences at the University of Alabama at Birmingham (UAB). Both faculty and students were given the study tool and asked to review the structures, functions, and practice questions for accuracy, effectiveness, and ease of navigation. Reviewers were given several weeks to assess the tool, and the majority agreed that it would be beneficial in the curriculum and serve as a supplemental neuroanatomy study resource for students.

The Tool's Novel Aspects

The tool was created using plastinated brain specimens located at UAB. In addition, the interactive features and toggle function between practice questions make it unique compared to other neuroanatomy tools. The practice questions embedded in the tool were designed to align with the UAB curriculum and represent questions/structure identifications that students will see when they take their lab assessment. Moreover, the structures, definitions, and practice questions were created and reviewed by anatomy instructors at our institution, validating the tool's accuracy and ability to be effective in supporting students' knowledge. Anatomy faculty and students confirm that there is no other tool made by our institution that aligns better with the neuroanatomy curriculum than VIBA. In addition, copyright was obtained after the tool's creation (Intellectual Property Disclosure Number: U23-055–Apr 5, 2023).

Distribution of the VIBA Tool

Two populations of students were assessed: first-year optometry students in a Neuroscience course and undergraduate students enrolled in a Neuroscience course tailored to their respective levels. Both courses incorporate a balanced basic science view of the structure and function of the nervous system, providing students with working knowledge from perspectives that range from molecular to behavioral. In addition, both courses consist of interactive didactic sessions and real brain lab experiences exposing students to the various regions of the brain. Both courses have a nearly identical brain structure list requiring students to find and review various anatomical features/structures in the human brain.

The VIBA study tool was integrated into the undergraduate and optometry neuroanatomy course curriculum. Students were given 24/7 access to the brain atlas for the length of their semester course. Students could access the study tool both in class and outside of the classroom.

Student Perception Survey Distribution

The survey included Likert scale items, a multiple-choice style question, and an open-ended question. The Likert scale items measured participants' perceptions and experiences related to the study tool's effectiveness. The first set of Likert scale items ranged from 0 (none) to 4 (excellent), and the second set of Likert items ranged from 0 (strongly disagree) to 4 (strongly agree). The multiple-choice style question asked whether participants used the study tool outside of the lab, with answer options of Yes, No, and Maybe. The open-ended item asked students to provide any additional comments regarding their experience with the online brain atlas tool. The survey was in paper format and distributed at the end of the students' semester. All responses were anonymous; the paper surveys were kept in a locked room, and responses were entered on a password-protected computer belonging to the researchers.

Data Analysis

Data was initially uploaded to Microsoft Excel (Microsoft Corporation, Redmond, WA) for storage and cleaning. Data was then transferred to SPSS, version 29 (IBM Corp., Armonk, NY). Likert scale responses are reported as mean ± standard deviation. Significant differences in optometry and undergraduate student perceptions were determined by an independent samples t-test. Statistical significance was set at p < 0.05. Cronbach's alpha was calculated to assess the internal consistency and reliability of the survey instrument.
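For readers unfamiliar with these two statistics, the following is a minimal sketch of how they can be computed, written with the Python standard library only. It is not the authors' SPSS procedure: the function names `cronbach_alpha` and `pooled_t` are hypothetical, the scores are made-up Likert values for illustration, and the equal-variance (pooled) form of the t statistic is an assumption, since the text does not say which variant was reported. The p-value is omitted because the standard library has no t-distribution function.

```python
from math import sqrt
from statistics import mean, variance


def cronbach_alpha(items):
    """Cronbach's alpha for a list of per-item score lists.

    Each inner list holds one survey item's scores, one per respondent,
    in the same respondent order.
    """
    k = len(items)
    totals = [sum(scores) for scores in zip(*items)]  # per-respondent sum
    item_variance_sum = sum(variance(item) for item in items)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


def pooled_t(group_a, group_b):
    """Independent-samples t statistic and degrees of freedom.

    Assumes equal variances in both groups (pooled-variance form).
    """
    na, nb = len(group_a), len(group_b)
    pooled_var = ((na - 1) * variance(group_a)
                  + (nb - 1) * variance(group_b)) / (na + nb - 2)
    t = (mean(group_a) - mean(group_b)) / sqrt(pooled_var * (1 / na + 1 / nb))
    return t, na + nb - 2


# Hypothetical 0-4 Likert scores, for illustration only (not study data).
item_scores = [[0, 1, 2, 3], [1, 2, 3, 4]]
alpha = cronbach_alpha(item_scores)

group_a = [3, 4, 4, 4]
group_b = [2, 3, 3, 4]
t_stat, dof = pooled_t(group_a, group_b)

print(f"alpha = {alpha:.3f}")
print(f"t = {t_stat:.3f}, df = {dof}")
```

In practice, the t statistic and degrees of freedom would be passed to a t-distribution to obtain a two-sided p-value, which is then compared against the 0.05 threshold stated above.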

For free responses, a thematic analysis was conducted. Three independent investigators determined themes and assigned them to the comments submitted by students. Differences in assigned themes were discussed amongst the investigators and resolved. Themes were reported as overall number (n) and as a percentage (n / number of student responses × 100).
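As a worked illustration of the percentage calculation, the sketch below applies it to the theme counts reported in Table 2, using the denominators given in that table's headers (37 optometry and 15 undergraduate responses). The function name `theme_percentages` and the shortened theme labels are illustrative choices, not part of the study materials.

```python
def theme_percentages(theme_counts, n_responses):
    """Return each theme's share of responses as a percentage (1 decimal)."""
    if n_responses <= 0:
        raise ValueError("n_responses must be positive")
    return {
        theme: round(100 * count / n_responses, 1)
        for theme, count in theme_counts.items()
    }


# Theme counts as reported in Table 2; labels are shortened for readability.
optometry_counts = {
    "helpful": 20,
    "easy to navigate": 7,
    "more sections/detail": 0,
    "access outside lab": 2,
}
undergraduate_counts = {
    "helpful": 12,
    "easy to navigate": 2,
    "more sections/detail": 4,
    "access outside lab": 1,
}

optometry_pct = theme_percentages(optometry_counts, 37)
undergraduate_pct = theme_percentages(undergraduate_counts, 15)

for theme in optometry_counts:
    print(f"{theme}: {optometry_pct[theme]}% vs {undergraduate_pct[theme]}%")
```

The undergraduate counts sum to more than 15, which suggests that a single comment could be assigned more than one theme, so the percentages within a group need not add up to 100.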

Ethical Considerations

This study was granted exempt status (IRB #300010425) by the Institutional Review Board of the University of Alabama at Birmingham (UAB).

Results

To analyze the effectiveness of the VIBA study tool, a post-survey was conducted on a total of 67 participants: 44 optometry students and 23 undergraduate students. All students from each course participated in the survey. Participants were asked to describe their perception on a Likert scale (Cronbach’s alpha = 0.595).

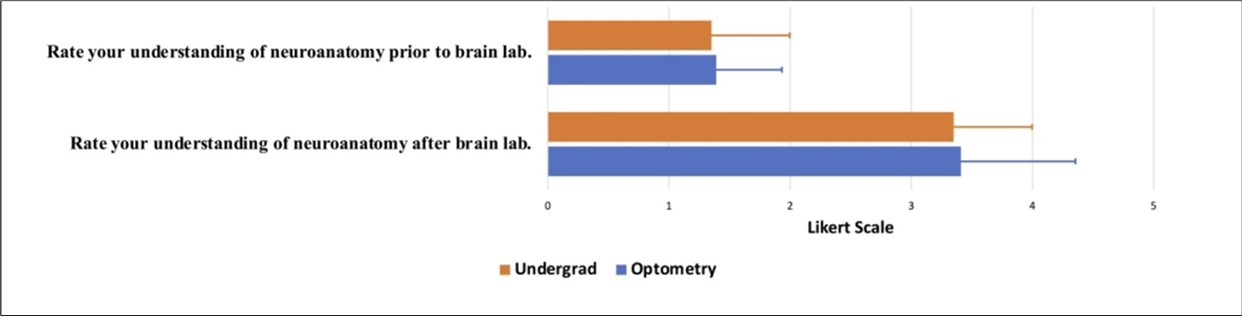

Students were asked to rate their understanding of neuroanatomy prior to and after the brain lab using a Likert scale ranging from 0 (none) to 4 (excellent). The majority of optometry students rated their level of understanding of neuroanatomy prior to the brain lab as poor (24 out of 44, 54.5%). The majority of undergraduate students rated their level of understanding of neuroanatomy prior to the brain lab as poor (11 out of 23, 47.8%) or average (10 out of 23, 43.5%). Overall, participants felt their knowledge of neuroanatomy prior to teaching in the lab was not adequate for both undergraduate (1.35 ± 0.647) and optometry (1.39 ± 0.945) students (Figure 3). No significant differences were seen in compared responses for optometry and undergraduate students when rating their understanding of neuroanatomy prior to the brain lab (p = 0.194).

In comparison, most optometry students rated their understanding of neuroanatomy after the brain lab as good (24 out of 44, 54.5%) or excellent (19 out of 44, 43.2%). The majority of undergraduate students rated their level of understanding of neuroanatomy after the brain lab as good (11 out of 23, 47.8%) or excellent (10 out of 23, 43.5%). Compared with before the brain lab, students in both the undergraduate (3.35 ± 0.647) and optometry (3.41 ± 0.542) groups felt their knowledge of neuroanatomy was sufficient (Figure 3). No significant differences were found between groups (p = 0.308).

Figure 3. Post-course survey on participants' understanding of neuroanatomy prior to and after brain lab. The 5-point Likert scale is as follows: 0 = none, 1 = poor, 2 = average, 3 = good, and 4 = excellent. Results from the rating prior to the brain lab: Undergrad (orange) 1.35 ± 0.647, Optometry (blue) 1.39 ± 0.945. P-value = 0.194. Results from the rating after brain lab: Undergrad (orange) 3.35 ± 0.647, Optometry (blue) 3.41 ± 0.542. P-value = 0.308. Data is shown as Mean ± SD.

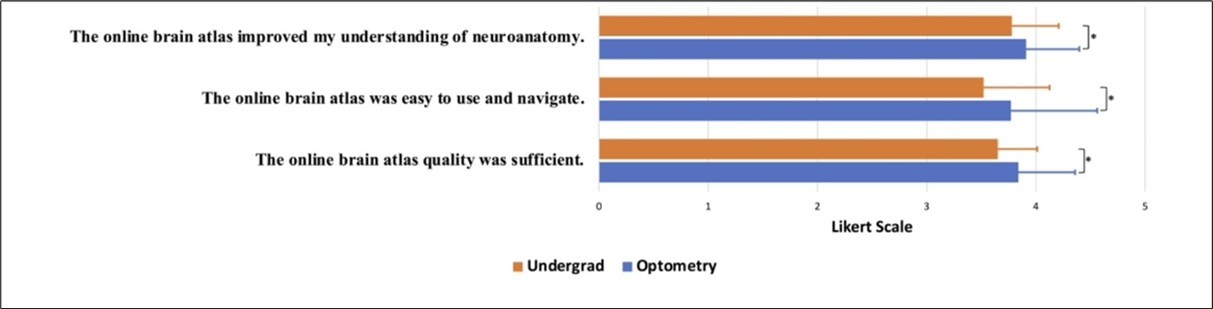

Students were asked to rate a series of Likert scale questions ranging from 0 (strongly disagree) to 4 (strongly agree). A large majority of both optometry (3.91 ± 0.362) and undergraduate (3.78 ± 0.518) students felt that the online brain atlas improved their understanding of neuroanatomy. A significant difference was found between groups (p = 0.032), with 93.2% (41 out of 44) of optometry students and 82.6% (19 out of 23) of undergraduate students stating that they strongly agreed that the online brain atlas improved their understanding of neuroanatomy. A significant difference was also found between groups when students were asked to rate whether the online brain atlas was easy to navigate (p = 0.048), with 84.1% (37 out of 44) of optometry students and 65.2% (15 out of 23) of undergraduate students strongly agreeing that the VIBA study tool was easy to navigate. Overall, optometry students agreed more strongly (3.77 ± 0.605) than undergraduate students (3.52 ± 0.790). A similar significant difference was found (p = 0.015) when students were asked to rate the quality of the online atlas, with 86.4% (38 out of 44) of optometry students and 65.2% (15 out of 23) of undergraduate students stating that they strongly agreed that the online brain atlas quality was sufficient. Overall, optometry students agreed more strongly (3.84 ± 0.428) than undergraduate students (3.65 ± 0.487) (Figure 4). Next, participants were asked if they used the brain atlas to self-study at home. Significantly more undergraduate students (95.7%, 22 out of 23) reported using VIBA to self-study at home compared to optometry students (90.9%, 40 out of 44; p = 0.020) (Table 1).

Figure 4. Post-course survey on participants' experience. The 5-point Likert scale is as follows: 0 = strongly disagree, 1 = somewhat disagree, 2 = neither agree nor disagree, 3 = somewhat agree, and 4 = strongly agree. Results from the Likert rating of "the online brain atlas improved my understanding of neuroanatomy": Undergrad (orange) 3.78 ± 0.518, Optometry (blue) 3.91 ± 0.362. P-value = 0.032. Results from the Likert rating of "the online brain atlas was easy to use and navigate": Undergrad (orange) 3.52 ± 0.790, Optometry (blue) 3.77 ± 0.605. P-value = 0.048. Results from the Likert rating of "the online brain atlas quality was sufficient": Undergrad (orange) 3.65 ± 0.487, Optometry (blue) 3.84 ± 0.428. P-value = 0.015. Data is shown as Mean ± SD.

Table 1. Summary of participants' use of the online brain atlas for self-study at home.

| Question | Optometry n (% = n/44) | Undergraduate n (% = n/23) | P-value |
| --- | --- | --- | --- |
| Q3. Did you use the online brain atlas tool to self study at home? | | | 0.020 |
| 1 - Yes | 40 (90.9%) | 22 (95.7%) | |
| 2 - Maybe | 0 (0.0%) | 1 (4.3%) | |
| 3 - No | 4 (9.1%) | 0 (0.0%) | |

Participants were asked to provide any additional comments regarding their experience with VIBA on free-response questions. The majority of the participants found the brain atlas to be helpful and beneficial to their learning. In addition, many participants found the study tool easy to navigate. Specifically, a few described their appreciation for having a study tool accessible outside the brain lab. While most students did not have elements/features of the tool they particularly did not enjoy, some suggested the need for more information and brain sections to be incorporated into the study tool. Please refer to Table 2 for a summary of these results.

Table 2. Summary of additional participants' comments/suggestions.

| Q4. Please provide any additional comments in the space below regarding your experience with the online brain atlas tool. | Optometry n (% = n/37) | Selected comments (Optometry) | Undergraduate n (% = n/15) | Selected comments (Undergraduate) |
| --- | --- | --- | --- | --- |
| Helpful/useful for studying/learning | 20 (54.1%) | “It was extremely helpful to have this resource for studying.” | 12 (80.0%) | “The online brain atlas was extremely helpful in solidifying the neuroanatomy that was learned in the lab.” |
| Easy to navigate | 7 (18.9%) | “VIBA made neuroanatomy extremely easy to learn!” | 2 (13.3%) | “Easy to navigate and pictures matched what was asked in labs. So good/almost doesn't need instructions for how to navigate.” |
| Suggestion of adding more brain sections/more detail added | 0 (0.0%) | NA | 4 (26.7%) | “I would appreciate further details about the functions of the brain structures.” |
| Having access outside of the lab | 2 (5.4%) | “VIBA was an excellent tool to study the different parts of the brain following the lab and prior to exams.” | 1 (6.7%) | “My favorite part about this tool is that we didn't have to be in the lab to improve our understanding” |

Discussion

Our study aimed to create an online interactive brain database to facilitate students' learning in neuroanatomy courses. Results show that students perceived VIBA as a successful teaching tool that facilitated the efficacy of their neuroanatomy learning process, regardless of learning level. Responses also showed that students appreciated the comprehensive nature of the resource and how the atlas was tailored to our students' curriculum. In addition, our brain atlas contained a dictionary that allowed students to review terms in the same resource they were reviewing structures, which was a significant factor in the tool's development since students often prefer an "all-in-one" study resource 29.

The success of VIBA could be explained by a variety of components. While our tool provided the majority of the structures listed in the students' course syllabus, some structures could not be obtained due to the lack of plastinated brains available for use, which may have led to comments regarding the need for more structures and details in the brain atlas (Table 2). The human brain is unique per individual, and if students rely solely on our study tool as their resource for learning, their perception of the human brain may be misinformed into thinking that all brain slices are symmetrical and resemble one another. The concept of variability seen in anatomical structures deepens students' understanding of the human body and its way of functioning 31, 32.

Moreover, the high-quality images, along with the level of detail in the slices chosen, could have contributed to the tool's success by allowing students to visualize a large number of structures per slice. Often, in the gross anatomy brain lab, students have to look through multiple brain slices to find the exact structure they are reviewing. With VIBA, students can find most, if not all, of the structures they need to review on a single slice. However, this comes with a limitation, because students are unable to see anatomical variations.

Next, a potential success factor could have been the ability of students to answer practice questions on each structure. Studies have found that students' overall scores on exams increase when practice questions are available. Having their knowledge tested also allows students to see gaps in their understanding of concepts prior to a formal assessment 33, 34, 35. By using these proactive approaches, students are able to focus more of their attention on improving their understanding of the concepts/topics. Moreover, incorporating more practice assessments into students' learning can lead to higher examination success and retention of the material.

In addition, the interactive features embedded into the PowerPoint tool may have contributed to the success and enhancement of students' learning. Interactive learning resources positively correlate with student engagement and promote an active learning environment 36. A virtual interactive model-based study tool creates a personalized learning experience and steers students away from passive studying 37. The positive impact of interactive learning resources on student retention has been shown to lead to enhanced learning 38. In addition, interactive learning caters to different learning styles, so more diverse needs are met. Interactive features also provide immediate feedback and real-time reinforcement of learning.

Lastly, a potential reason for the tool's effectiveness on students' positive perception and understanding of neuroanatomy could have been the ability for students to use it at home. 24/7 access to a resource allows students to review the material at their convenience. Giving students the option to review material at the time that best supports their learning has been shown to increase students' satisfaction 39, 40, 41. The flexibility of the use of the tool allows students with a variety of schedules and outside commitments to benefit. Furthermore, long-term retention and consistent habits can be prompted by continuous access to supplemental learning resources.

Limitations

Although our online study tool was perceived as useful and effective in students' learning of neuroanatomy, our study did have limitations. Limitations of the tool include, but are not limited to, the difficulty of creating icons that make the brain slices easily viewable and of incorporating the necessary identifiable structure terms without decreasing the visual quality of the product. The VIBA tool has a large file size, leading to potential limitations. In addition, the software we used for VIBA does not have the capacity to track student usage. Next, while Microsoft PowerPoint software was sufficient for our current VIBA version, adding a vast amount of imaging would be beneficial to students but could potentially decrease the speed of the software, resulting in lower user satisfaction. Limitations regarding data collection include, but are not limited to, having a small sample size, comparing two student populations of different academic levels, not assessing differences across more demographic factors, and not comparing grades before and after VIBA use. Finally, the survey questionnaire's brief nature could have prevented important information from being completely captured.

Future Directions

In order to provide additional imaging, an MRI component has been approved and will be incorporated into the VIBA study tool using a single individual's T1-weighted MRI scans. The individual is unidentified and consented to the use of the scans in the study tool. The goal is to use MRIcron, a cross-platform NIfTI format image viewer, to scan for images that closely resemble the plastinated brain slices.

Conclusion

Overall, our study confirmed that providing a newly developed interactive study tool as an additional resource for students benefits their learning of neuroanatomy. The Virtual Interactive Brain Atlas (VIBA) was a successful study tool in increasing the students' effectiveness and efficacy in neuroanatomy both in laboratories and at home. Thus, the VIBA study tool should be used in future neuroanatomy courses as an additional study tool for students.

Notes on Contributors

HUNTER C DAVIES, M.S., is a medical student at Heersink School of Medicine, University of Alabama at Birmingham. Her research interests include technology in medical education. She is also heavily involved in teaching anatomy and neuroanatomy to medical, dentistry, optometry, and biomedical sciences master students.

INGA KADISH, Ph.D., is an associate professor in the Department of Cell, Developmental and Integrative Biology, Heersink School of Medicine, University of Alabama at Birmingham. Her research interests include preclinical studies in Alzheimer’s disease and brain aging. She is also heavily involved in teaching anatomy and neuroanatomy to medical, dentistry, optometry, PhD nursing and biomedical sciences master students.

DANIELLE N EDWARDS, Ph.D., is an assistant professor in the Department of Cell, Developmental, and Integrative Biology, Heersink School of Medicine, University of Alabama at Birmingham. She teaches gross anatomy and neuroanatomy to medical, health professional and masters students. Her research interest is in technology in medical education.

JORDAN GEORGE, M.D., is a resident in the Department of Neurosurgery, University of Alabama at Birmingham. His research interests include global neurosurgery, vascular, skull base, and spine.

RONGBING XIE, Ph.D., is an assistant professor in the Department of Surgery, University of Alabama at Birmingham. Her research interests include optimizing health care delivery systems, evaluating comparative effectiveness of surgical interventions, statistics, econometrics, simulation modeling, and clinical outcomes research.

Disclosure

After the development of the VIBA study tool, the invention was disclosed to the Bill L. Harbert Institute for Innovation and Entrepreneurship (HIIE) and is protected under the University of Alabama at Birmingham (UAB). Copyright was given to H.C.D. and I.K. (Intellectual Property Disclosure Number: U23-055–Apr 5, 2023). However, the authors have no commercial interest in any product mentioned or concept discussed in this article.

Data availability statement

Data are available upon request.

Funding Statement

There is no funding.

Ethics Approval Statement

The study was given exempt status (IRB #300010425) by the Institutional Review Board of the University of Alabama at Birmingham (UAB).

Acknowledgements

The authors want to acknowledge the University of Alabama at Birmingham and the undergraduate and optometry students that gave their consent for this work to be carried out.

References

- 1.Korf Horst-Werner. (2008) The Dissection Course – Necessary and Indispensable for Teaching Anatomy to Medical Students.” Annals of Anatomy - Anatomischer Anzeiger. 16-22.

- 2.Lisa M Parker. (2002) Anatomical Dissection: Why Are We Cutting It Out? Dissection in Undergraduate Teaching.”. , ANZ Journal of Surgery 72(12), 910-912.

- 3.Turney B W. (2007) Anatomy in a Modern Medical Curriculum.”. , The Annals of the Royal College of Surgeons of England 89(2), 104-107.

- 4.M A Javaid, Chakraborty S, J F Cryan, Schellekens H, Toulouse A. (2017) Understanding neurophobia: Reasons behind impaired understanding and learning of neuroanatomy in cross-disciplinary healthcare students. , Anatomical Sciences Education 11(1), 81-93.

- 5.C, S M Bridges, G L Tipoe. (2021) Why is Anatomy Difficult to Learn? The Implications for Undergraduate Medical Curricula. Anatomical Sciences Education.https://doi.org/10.1002/ase.2071.

- 6.Jacquesson T, Simon É, Dauleac C, Margueron L, Robinson P et al. (2020) Stereoscopic three-dimensional visualization: interest for neuroanatomy teaching in medical school. Surgical and Radiologic Anatomy. 42(6), 719-727.

- 7.M A Sotgiu, Mazzarello V, Bandiera P, Madeddu R, Montella A et al. (2019) Neuroanatomy, the Achille’s Heel of Medical Students. A Systematic analysis of educational strategies for the teaching of neuroanatomy. , Anatomical Sciences Education 13(1), 107-116.

- 8.Amber R Comer. (2022) The Evolving Ethics of Anatomy: Dissecting an Unethical Past in Order to Prepare for a Future of Ethical Anatomical Practice.” The Anatomical Record. 305(4).

- 9.L O Dissabandara, S N Nirthanan, T K Khoo, Tedman R. (2015) Role of cadaveric dissections in modern medical curricula: a study on student perceptions. Anatomy & Cell Biology 48(3), 205-10.

- 10.S K Ghosh. (2016) Cadaveric dissection as an educational tool for anatomical sciences in the 21st century. Anatomical Sciences Education 10(3), 286-299.

- 11.W K Ogard. (2014) Outcomes Related to a Multimodal Human Anatomy Course With Decreased Cadaver Dissection in a Doctor of Physical Therapy Curriculum. Journal of Physical Therapy Education 28(3), 21-26.

- 13.Bentley S, R V Hill. (2009) Objective and subjective assessment of reciprocal peer teaching in medical gross anatomy laboratory. Anatomical Sciences Education 2(4), 143-149.

- 14.D N Edwards, E R Meyer, W S Brooks, A B Wilson. (2022) Faculty retirements will likely exacerbate the anatomy educator shortage. Anatomical Sciences Education 16(4), 618-628.

- 15.C N Garnett, W S Brooks, Singpurwalla D, A B Wilson. (2023) Update on the state of the anatomy educator shortage. Anatomical Sciences Education 16(6), 1118-1120.

- 16.R S McCuskey, S W Carmichael, D G Kirch. (2005) The Importance of Anatomy in Health Professions Education and the Shortage of Qualified Educators. Academic Medicine 80(4), 351-10.

- 17.A B Wilson, A J Notebaert, A F Schaefer, B J Moxham, Stephens S. (2020) A Look at the Anatomy Educator Job Market: Anatomists Remain in Short Supply. Anatomical Sciences Education 13(1), 91-101. doi: 10.1002/ase.1895. PMID: 31095899.

- 18.A V Zinchuk, E P Flanagan, N J Tubridy. (2010) Attitudes of US medical trainees towards neurology education: "Neurophobia" - a global issue. BMC Medical Education 10(49), 10-1186.

- 19.R F Jozefowicz. (1994) Neurophobia: The fear of neurology among medical students. Archives of Neurology 51(4), 328-329.

- 20.Józefowicz R, Tarolli C. (2018) Managing Neurophobia: How can we meet the current and future needs of our students? Seminars in Neurology 38(04), 407-412.

- 21.E G Doubleday, V D O’Loughlin, A F Doubleday. (2011) The virtual anatomy laboratory: Usability testing to improve an online learning resource for anatomy education. Anatomical Sciences Education 4(6), 318-326.

- 22.Rodrigo E Elizondo-Omaña. (2005) Dissection as a Teaching Tool: Past, Present, and Future. The Anatomical Record Part B: The New Anatomist 285, 11-15.

- 23.P J O’Byrne, Patry A, J A Carnegie. (2008) The development of interactive online learning tools for the study of Anatomy. Medical Teacher 30(8), 260-271.

- 24.D B Topping. (2013) Gross Anatomy Videos: Student Satisfaction, Usage, and Effect on Student Performance in a Condensed Curriculum. Anatomical Sciences Education 7(4), 273-279.

- 25.Antonopoulos I, Pechlivanidou E, Piagkou M, Panagouli E, Chrysikos. (2022) Students’ perspective on the interactive online anatomy labs during the COVID-19 pandemic. Surgical and Radiologic Anatomy 44(8), 1193-1199.

- 26.Mathiowetz V, C H Yu, Quake-Rapp C. (2015) Comparison of a gross anatomy laboratory to online anatomy software for teaching anatomy. Anatomical Sciences Education 9(1), 52-59.

- 27.Ae Memudu, I C Anna, M Oluwatosin Gabriel, Oviosun A, W B Vidona et al. (2022) Medical Students Perception of Anatomage: A 3D Interactive (Virtual) Anatomy Dissection Table. https://doi.org/10.1101/2022.04.25.22274178.

- 28.J M Logan, A J Thompson, D W Marshak. (2011) Testing to enhance retention in human anatomy. Anatomical Sciences Education 4(5), 243-248.

- 29.M A Javaid, Schellekens H, J F Cryan, Toulouse A. (2019) Evaluation of Neuroanatomy Web-Resources for Undergraduate Education: Educators’ and Students’ Perspectives. Anatomical Sciences Education 13(2), 237-249.

- 30.J P Purdy. (2012) Why first-year college students select online research resources as their favorite. First Monday 17(9).

- 31.Nassiri M. (2016) Using 3D Modeling Techniques to Enhance Teaching of Difficult Anatomical Concepts. ResearchGate 23(4).

- 32.Turney B W. (2007) Anatomy in a Modern Medical Curriculum. The Annals of the Royal College of Surgeons of England 89, 104-107.

- 33.M B Chollet, M F Teaford, E M Garofalo, V B DeLeon. (2009) Student laboratory presentations as a learning tool in anatomy education. Anatomical Sciences Education 2(6), 260-264.

- 34.R W Clough, R P Lehr. (1996) Testing knowledge of human gross anatomy in medical school: An applied contextual-learning theory method. Clinical Anatomy 9(4), 263-268.

- 35.T W Huitt, Killins A, W S Brooks. (2014) Team-based learning in the gross anatomy laboratory improves academic performance and students’ attitudes toward teamwork. Anatomical Sciences Education 8(2), 95-103.

- 36.Friedmann T, Bai J D K, Ahmad S, R M Barbieri, Iqbal S et al. (2019) Outcomes of introducing a mobile interactive learning resource in a large medical school course. Medical Science Educator 30(1), 25-29.

- 37.Çevikbaş M, Kaiser G. (2022) Promoting Personalized Learning in Flipped Classrooms: A Systematic review study. Sustainability 14(18), 11393-10.

- 38.Campbell C, Blair H. (2018) Learning the active way. In Advances in educational technologies and instructional design book series, 21-37.

- 39.Alphonce S, Mwantimwa K. (2019) Students’ use of digital learning resources: diversity, motivations and challenges. Information and Learning Sciences 120(11), 758-772.